After being chosen as a VMware vExpert for 2017 this month, I was inspired to start refreshing my vSphere "homelab" environment despite a busy travel stretch in late February and early March. This won't be a deep technical dive into lab building; rather, I just want to share some ideas and adventures from my lab gear accumulation over the past year.

As a disclosure: while I do work for Cisco, my vExpert status and homelab building are at most peripherally connected to that job (for example, the homelab at home connects to a Meraki switch whose license I got at an employee discount). And even though I'm occasionally surprised when I use older, higher-end Dell or HP gear, it's not a conflict of interest or an out-of-bounds effort. It's just what I find great deals on at local used-hardware shops from time to time.

The legacy lab at Andromedary HQ

Also read: New Hardware thoughts for home labs (Winter 2013)

Stock Photo of a Dell C6100 chassis

During my last months at the Mickey Mouse Operation, I picked up a Dell C6100 chassis (dual twin-style Xeon blade-ish servers) with two XS23-TY3 servers inside. I put a Brocade BR-1020 dual-port 10GbE CNA in each and cabled them to a Cisco Small Business SG500XG-8F8T 10 Gigabit switch. A standalone VMware instance on my HP Microserver N40L hosted vCenter and some local storage. For shared storage, the Synology DS1513+ served for about two years before being moved back to my home office for maintenance.

The Dell boxes have been up for almost three years, which is not bad considering they share a 750VA "office" UPS with the Microserver, the 10Gig switch, usually a monitor, and occasionally an air cleaner. The Microserver had been misbehaving, stuck at boot for who knows how long, but a power cycle brought it back up.

I'll be upgrading these boxes to vSphere 6.5.0 over the next month, and replacing the NAS that provides shared storage across the workshop network.

The 2017 Lab Gear Upgrades

For 2017, two new machines are being deployed; they will probably run nested ESXi or a purpose-built single-server instance (i.e., an upcoming big data sandbox project). Each has a fair number of DIMM slots and more than one CPU socket, and each initial purchase came in under US$200 before upgrades and memory population.

You may not be able to find these exact boxes on demand, but similar-scale machines are usually available at Weird Stuff in Sunnyvale for well under $500. Mind you, maxing them out will require very skilled hunting or at least a four-figure budget.

CPU/RAM cage in the HP Z800

First, the home box is an HP Z800 workstation. It started out as a single-processor E5530 workstation with 6GB of RAM; I've since upgraded it to dual E5645 processors (6-core, 2.4GHz, 12MB SmartCache) and 192GB of DDR3 ECC Registered RAM, replaced the 750GB spinning disk with a 500GB SSD, and added two 4TB SAS drives as secondary storage. I've also put in an Intel X520 single-port 10GbE card to connect to an SFP+ port on the Meraki MS42P switch at home, alongside the two Gigabit Ethernet ports on the board.

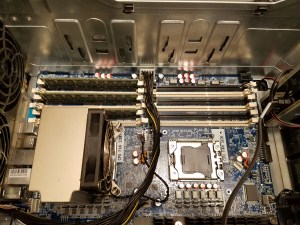

CPU/RAM cage in Intel R2208 chassis

Second, the new shop box is an Intel R2208LT2 server system: a 2RU four-socket E5-4600 v1/v2 server with 48 DIMM slots supporting up to 1.5TB of RAM, eight 2.5″ hot-swap drive bays, and onboard dual-port 10GbE in the form of an X540 10GBase-T controller. I bought the box with no CPUs or RAM, and have installed four E5-4640 (v1) processors (eight cores each, 32 cores in total) and 32GB of RAM so far. More RAM is coming, since 1GB per core seems a bit Spartan for this kind of server.
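As a quick sanity check on that 1GB-per-core figure, here's a minimal Python sketch comparing RAM per physical core on the two new boxes, using only the CPU and memory counts from this post. The helper name `ram_per_core` is hypothetical, Hyper-Threading threads aren't counted, and the "maxed" line assumes all 48 DIMM slots filled with 32GB modules (48 × 32GB = 1.5TB).

```python
# Hypothetical sizing helper: GB of RAM per physical core (HT threads not counted).
def ram_per_core(sockets: int, cores_per_socket: int, ram_gb: float) -> float:
    return ram_gb / (sockets * cores_per_socket)

# HP Z800 as upgraded: dual 6-core E5645, 192GB
z800 = ram_per_core(2, 6, 192)

# Intel R2208LT2 as installed so far: four 8-core E5-4640 (v1), 32GB
r2208_now = ram_per_core(4, 8, 32)

# Same box at its 1.5TB ceiling, assuming 48 slots x 32GB modules
r2208_max = ram_per_core(4, 8, 48 * 32)

print(f"Z800:           {z800:.0f} GB/core")
print(f"R2208LT2 today: {r2208_now:.0f} GB/core")
print(f"R2208LT2 maxed: {r2208_max:.0f} GB/core")
```

The home workstation works out to 16GB per core, versus 1GB per core on the shop box today, which is why the R2208LT2 is first in line for more memory.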

There's a dual 10GbE SFP+ I/O module on its way, and this board can take two such modules (or dual 10GBase-T, quad Gigabit Ethernet, or single/dual-port InfiniBand FDR modules).

The Z800 is an impressively quiet system; the fans on my Dell XPS 15 laptops run louder than the Z800 does under modest use. By comparison, the Intel R2208LT2 sounds like a Sun Enterprise 450 server when it starts up. Eleven high-speed fans spinning up for POST can be pretty noisy.

So where do we go from here?

Travel and speaking engagements are starting to pick up a bit, but I've been putting in some weekend time between trips to get things going. Spring priorities will be deploying vSphere 6.x on the legacy lab as well as the new machines, and setting up the SAN and DR/BC gear. We'll probably also retire some of the older gear, for example upgrading the standalone vCenter box from the N40L to an N54L, or perhaps moving vCenter to one of the older NUCs to save space and power.

I also have some more tiny-form-factor machines to plug in and write up. My theory is that there's no reason you can't carry a vSphere system anywhere you go, on a budget not far above that of a laptop with a mainstream processor. And if you have the time and energy, you can build a monster system for less than the price of a high-end ultrabook.

Disclosure: Links to non-current products are eBay Partner Network links; links to current products are Amazon affiliate links. In either case, if you purchase through links on this post, we may receive a small commission to pour back into the lab.
